Executive Summary

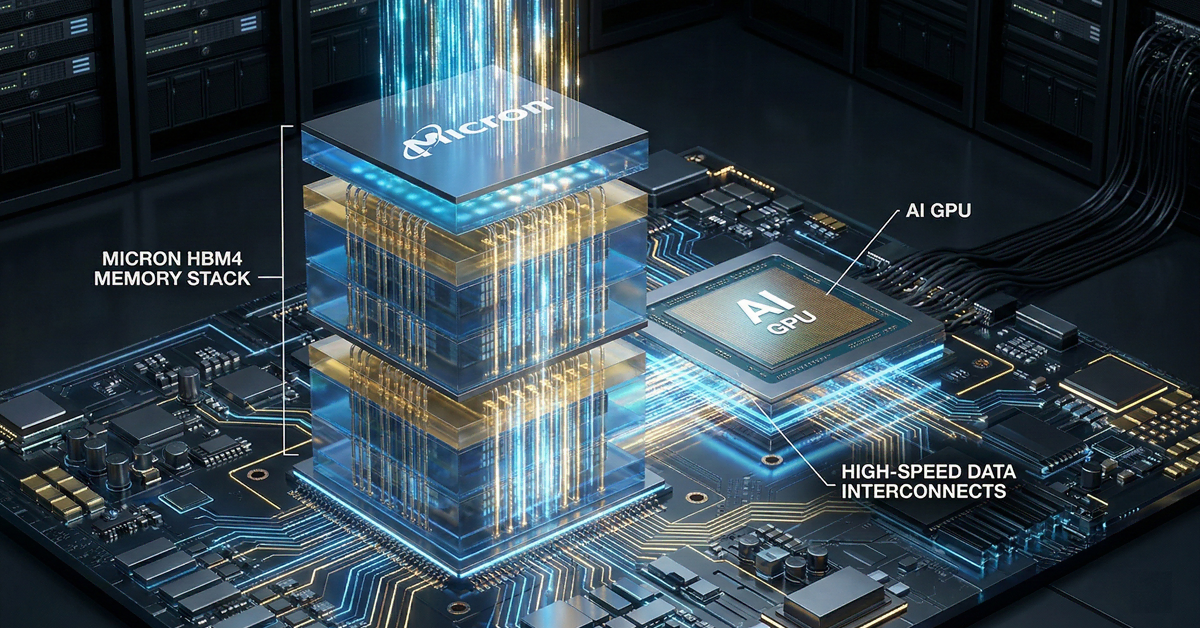

Micron Technology (NASDAQ: MU) has undergone a fundamental transformation, evolving from a traditional “fast follower” in the memory industry to a primary pioneer of the High-Bandwidth Memory (HBM) revolution. As of March 2026, the company stands at a critical inflection point. Having successfully captured significant market share with its HBM3E solutions, Micron is now aggressively positioning itself for the HBM4 cycle. With its entire HBM production capacity sold out through the end of 2026, the investment thesis for Micron has shifted from a cyclical recovery play to a structural growth story centered on AI infrastructure.

The transition to HBM4 represents more than just a generational update; it is an architectural shift that integrates memory more deeply with logic than ever before. Micron’s reliance on its advanced 1-beta and upcoming 1-gamma DRAM nodes provides a distinct power-performance-area (PPA) advantage over its primary rivals, SK Hynix and Samsung. However, the path to market parity is not without obstacles. While Micron has overtaken Samsung to claim approximately 21% of the HBM market, the ultimate ceiling for its growth may be determined by third-party packaging constraints, specifically at TSMC, rather than its own internal wafer capacity. This report provides a deep dive into the technical, financial, and strategic factors that will define Micron’s trajectory through the HBM4 era.

The HBM Landscape: From Scarcity to Structural Dominance

The global demand for AI accelerators, led by NVIDIA’s Blackwell and upcoming Rubin architectures, has created an unprecedented shortage of high-performance memory. In this environment, HBM has become the “oxygen” of the data center. For the first time in the history of the semiconductor industry, memory is no longer a commoditized afterthought but the primary bottleneck for AI training and inference at scale.

Micron’s management has confirmed that its HBM supply for both 2025 and 2026 is fully committed. This “sold-out” status provides a level of revenue visibility rarely seen in the memory sector. Traditionally, memory prices fluctuate wildly based on quarterly supply-demand imbalances. By securing multi-year volume and pricing agreements with hyperscalers and GPU designers, Micron is effectively “de-cyclicalizing” a portion of its business. This shift is reflected in the company’s record-breaking fiscal performance, with gross margins trending toward 60% and revenue reaching historic highs.

Technical Deep Dive: The 1-beta and 1-gamma Advantage

The core of Micron’s competitive edge lies in its front-end manufacturing excellence. While rivals have historically relied on earlier adoption of Extreme Ultraviolet (EUV) lithography, Micron perfected its multi-patterning techniques on the 1-beta (1β) node, achieving industry-leading bit density and power efficiency without the immediate complexities of EUV. This technical discipline allowed Micron to ramp HBM3E faster and with better yields than many expected.

The 1-beta Node: The Foundation of Current Success

Micron’s HBM3E and its initial HBM4 samples are built on the 1-beta node. This technology utilizes advanced CMOS innovations to deliver significant gains in performance-per-watt. In the context of HBM4, the 1-beta node supports 12-high and 16-high stack architectures that maintain a manageable thermal profile. Thermal management is the “silent killer” of HBM performance; as stacks get taller and densities increase, the heat generated can lead to throttling. Micron’s 1-beta process offers roughly 30% better power efficiency compared to competitor offerings on equivalent generations, a metric that is highly valued by data center operators looking to minimize Total Cost of Ownership (TCO).

The 1-gamma Node: EUV Integration and the Future of HBM4

As the industry moves deeper into the HBM4 cycle, Micron is transitioning to its 1-gamma (1γ) node. This represents the company’s first high-volume implementation of EUV lithography. The 1-gamma node is projected to provide:

- 30% Improvement in Bit Density: Allowing for higher capacity memory stacks (up to 48GB and 64GB per stack) within the same physical footprint.

- 20% Reduction in Power Consumption: Essential for the next generation of 2000W+ AI clusters.

- Enhanced Performance Scaling: Enabling pin speeds that exceed 11.5 Gbps, supporting the massive bandwidth requirements of the NVIDIA Rubin platform.
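The per-stack capacities in the first bullet follow from simple stack arithmetic: number of DRAM dies multiplied by the density of each die. The sketch below illustrates that relationship. The die densities (24Gb and 32Gb) and the function name are assumptions chosen for illustration, not figures disclosed by Micron.

```python
def stack_capacity_gb(dies_per_stack: int, die_density_gbit: int) -> float:
    """Capacity of one HBM stack in gigabytes (8 gigabits per gigabyte)."""
    return dies_per_stack * die_density_gbit / 8


# Hypothetical configurations: (stack height, die density in Gbit).
# The die densities are illustrative assumptions, not Micron specifications.
configs = [(12, 24), (16, 24), (16, 32)]

for dies, gbit in configs:
    capacity = stack_capacity_gb(dies, gbit)
    print(f"{dies}-high x {gbit}Gb dies: {capacity:.0f} GB per stack")
```

Under these assumed densities, a 16-high stack reaches 48GB or 64GB, which is why a denser die on 1-gamma raises capacity without enlarging the footprint.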

The successful ramp of 1-gamma is critical. If Micron can maintain its yield leadership during this transition, it will likely sustain its margin advantage over Samsung, which has historically struggled with yield consistency on its advanced nodes.

HBM4: The Architectural Revolution

HBM4 is not a mere evolution of HBM3E; it is a fundamental redesign of the memory-to-logic interface. The most significant change is the doubling of the interface width from 1024 bits to 2048 bits. This change is necessitated by the “Memory Wall”: the growing gap between the processing power of GPUs and the speed at which data can be fed into them.

Custom Logic Base Dies

In previous HBM generations, the “base die” (the logic layer at the bottom of the memory stack) was produced by the memory manufacturer itself. With HBM4, the base die is becoming a custom piece of silicon, often manufactured on advanced logic nodes (such as TSMC’s 5nm or 12nm) to allow for tighter integration with the GPU. Micron has established a “foundry-direct” model, collaborating closely with TSMC to ensure its HBM4 stacks are optimized for the CoWoS (Chip-on-Wafer-on-Substrate) packaging process. This collaboration is a strategic masterstroke, as it aligns Micron with the world’s most advanced logic foundry, effectively neutralizing the vertical integration advantage that Samsung (which has its own foundry) once claimed.

Performance Benchmarks

Micron’s HBM4 is targeted to deliver bandwidth exceeding 2.0 TB/s per stack. This is a 60% increase over the current HBM3E standard. For an AI server equipped with eight HBM4 stacks, the total aggregate bandwidth could exceed 16 TB/s. This level of performance is required to enable “Chain-of-Thought” reasoning and real-time multi-modal inference in the next generation of Large Language Models (LLMs).
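The bandwidth figures above can be sanity-checked from interface width and per-pin data rate. The sketch below is a back-of-the-envelope calculation only. The 2048-bit and 1024-bit widths come from this report, while the pin speeds (8.0 Gbps for HBM4 and 9.6 Gbps for HBM3E) are assumptions for illustration and may differ from shipping parts.

```python
def stack_bandwidth_tbps(interface_bits: int, pin_speed_gbps: float) -> float:
    """Peak bandwidth of one stack in TB/s.

    interface_bits * Gb/s per pin gives Gb/s; divide by 8 for GB/s,
    then by 1000 for TB/s.
    """
    return interface_bits * pin_speed_gbps / 8 / 1000


# Pin speeds below are assumed values, not confirmed product specifications.
hbm4 = stack_bandwidth_tbps(interface_bits=2048, pin_speed_gbps=8.0)
hbm3e = stack_bandwidth_tbps(interface_bits=1024, pin_speed_gbps=9.6)

stacks_per_accelerator = 8
aggregate = stacks_per_accelerator * hbm4
uplift = hbm4 / hbm3e - 1

print(f"HBM4 per stack:  {hbm4:.2f} TB/s")
print(f"HBM3E per stack: {hbm3e:.2f} TB/s")
print(f"Aggregate over {stacks_per_accelerator} stacks: {aggregate:.2f} TB/s")
print(f"Uplift vs HBM3E: {uplift:.0%}")
```

Under these assumptions the per-stack result lands just above 2.0 TB/s and the eight-stack aggregate just above 16 TB/s. The computed uplift is sensitive to the HBM3E pin speed chosen as the baseline, so it should be read as being in the same range as the roughly 60% cited above, not as an exact match.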

Market Share Dynamics: The Path to Parity

A central question for investors is whether Micron can bridge the gap with SK Hynix. Currently, SK Hynix maintains a dominant ~60% market share, largely due to its early mover advantage and “First Source” status with NVIDIA. Micron, however, has rapidly climbed to ~21%, displacing Samsung as the clear “Second Source” and potential “Co-Leader.”

Can Micron Reach Parity?

The argument for Micron reaching 30-35% market share (near-parity in a three-player market) rests on three pillars:

- Samsung’s Qualification Struggles: Samsung has repeatedly faced delays in qualifying its 12-high HBM3E parts with NVIDIA. Every quarter that Samsung remains in the “qualification wilderness” is an opportunity for Micron to seize more allocation.

- Customer Diversification: While NVIDIA is the primary driver, Micron is seeing heavy demand from AMD (for the MI400 series) and hyperscalers like Google and AWS, who are designing their own custom AI ASICs. These customers are eager to diversify their supply chains away from a single dominant provider.

- Capacity Expansion: Micron is pursuing a “brownfield” strategy, such as the acquisition of the PSMC P5 fab in Taiwan, which allows it to bring cleanroom capacity online faster than building from scratch. This helps overcome the physical constraints of wafer production.

However, reaching parity with SK Hynix is a high bar. Hynix has spent a decade refining its Mass Reflow Molded Underfill (MR-MUF) technology, which offers superior thermal dissipation. Micron utilizes a different packaging technique (Advanced Thermal Compression Non-Conductive Film), and while this has proven successful for HBM3E, the HBM4 era will be a true test of which packaging philosophy scales better.

The TSMC Bottleneck: The Real Ceiling

The most significant risk to Micron’s market share aspirations is not its own ability to produce wafers, but the industry’s ability to package them. HBM must be integrated with the GPU using advanced packaging techniques like CoWoS. TSMC currently controls the vast majority of this capacity.

The “Memory Wall” is being replaced by the “Packaging Wall.” Even if Micron produces enough HBM4 wafers to claim 40% of the market, if there is only enough CoWoS capacity to support 20% of those wafers, Micron’s revenue will be capped.
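The cap described above is a simple bottleneck relationship: shippable volume is the smaller of what the wafers can supply and what packaging can absorb. The sketch below treats both figures as shares of total market demand, which is an assumption about the intended reading of the 40% and 20% in the example. The function and variable names are hypothetical.

```python
def packaged_share(wafer_share: float, cowos_share: float) -> float:
    """Shippable share is limited by the tighter of the two constraints."""
    return min(wafer_share, cowos_share)


wafer_share = 0.40  # share of market demand Micron's wafer output could cover
cowos_share = 0.20  # share of market demand available CoWoS slots could package (assumed reading)

shipped = packaged_share(wafer_share, cowos_share)
stranded = wafer_share - shipped

print(f"Shippable share: {shipped:.0%}")
print(f"Wafer capacity left unpackaged: {stranded:.0%} of market demand")
```

The point of the sketch is that adding wafer capacity does nothing for revenue once packaging is the binding constraint; only an increase in the packaging term moves the result.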

TSMC is aggressively expanding its CoWoS capacity, but demand continues to outstrip supply. Micron’s reliance on TSMC (and on OSATs like Amkor) means it is part of a complex ecosystem where it does not control the final assembly. In contrast, Samsung’s “all-in-one” strategy (Memory + Foundry + Packaging) is designed to solve this exact problem. While Samsung has struggled with execution thus far, if it resolves its yield issues, its ability to offer a “turnkey” solution could limit Micron’s ability to gain further share.

Financial Trajectory: HBM as a Percentage of DRAM

For investors, the most important metric to watch is HBM revenue as a percentage of total DRAM revenue. Historically, this was in the low single digits. In early 2025, it trended toward 10-15%. As we move through 2026, Micron is on a path where HBM could represent 20% to 25% of its total DRAM revenue.

Impact on Margins

The financial implications of this shift are profound. HBM requires approximately three times the wafer capacity of standard DDR5 to produce the same number of bits. This “wafer cannibalization” naturally constrains the supply of commodity DRAM, leading to higher prices across the board. Furthermore, HBM is a high-margin, value-added product. The transition from selling commodity “bits” to selling complex “memory systems” (HBM stacks) is the primary driver of Micron’s gross margin expansion from the 20% range to over 50%.
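The “wafer cannibalization” effect can be illustrated with a small calculation. The sketch below uses the roughly three-to-one wafer trade ratio stated above. The 30% wafer allocation to HBM is a hypothetical input chosen for illustration, not a Micron disclosure, and the function name is likewise an assumption.

```python
def relative_bit_output(hbm_wafer_fraction: float, trade_ratio: float = 3.0) -> float:
    """Total DRAM bit output relative to an all-commodity baseline of 1.0.

    Wafers kept on commodity DRAM produce bits at the baseline rate;
    wafers moved to HBM produce 1/trade_ratio as many bits.
    """
    commodity_bits = 1.0 - hbm_wafer_fraction
    hbm_bits = hbm_wafer_fraction / trade_ratio
    return commodity_bits + hbm_bits


hbm_wafer_fraction = 0.30  # hypothetical share of wafer starts allocated to HBM
output = relative_bit_output(hbm_wafer_fraction)

print(f"Total bit output vs all-commodity baseline: {output:.0%}")
print(f"Commodity DRAM bit supply vs baseline: {1.0 - hbm_wafer_fraction:.0%}")
```

Under this assumed allocation, total bit output falls by about a fifth even though no wafer capacity has been removed, which is the mechanism behind the tighter commodity DRAM supply described above.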

Capital Expenditure (CapEx)

Maintaining this leadership requires massive investment. Micron has signaled fiscal 2026 CapEx of approximately $20 billion. This capital is being deployed into EUV tools, HBM stacking equipment, and new fab construction in Idaho and New York. While this level of spending is eye-watering, it is backed by long-term contracts. The “Return on Invested Capital” (ROIC) for HBM remains significantly higher than that of traditional DRAM cycles, justifying the aggressive spend.

Competitive Matrix: 2026 HBM4 Outlook

| Metric | Micron (MU) | SK Hynix | Samsung |
|---|---|---|---|
| Current HBM Share | ~21% | ~60% | ~17% |
| HBM4 Node | 1-beta / 1-gamma | 1-beta (10nm) | 1-alpha / 1-beta |
| Packaging Tech | Advanced TC-NCF | MR-MUF | TC-NCF / Hybrid Bonding |
| 2026 Supply Status | 100% Sold Out | 100% Sold Out | Partially Committed |
| Logic Partnership | TSMC Lead Partner | TSMC Lead Partner | Internal Foundry |

Risk Factors and Challenges

Despite the bullish outlook, investors must consider several risk factors that could derail the Micron thesis:

- Yield Volatility on 1-gamma: Any delay in the 1-gamma ramp or lower-than-expected yields from EUV could impact Micron’s ability to meet its sold-out commitments.

- The “AI Bubble” Question: If hyperscale CapEx slows down in 2027, the industry could face a sudden oversupply of HBM, leading to a traditional memory crash. However, the current “sold-out” contracts mitigate this risk in the near term.

- Geopolitical Risks: With significant manufacturing in Taiwan and China, and massive new fab projects in the U.S., Micron is at the center of the global “chip war.” Trade restrictions or regional instability could disrupt its supply chain.

- Samsung’s Potential Comeback: Samsung is a well-capitalized giant. If it qualifies its HBM4 with NVIDIA earlier than expected, it could use its massive scale to engage in a price war to regain share.

The Path Forward: NVIDIA’s “Rubin” and Beyond

The upcoming 2026-2027 period will be dominated by the ramp of NVIDIA’s Rubin platform, which will be the first to utilize HBM4 at scale. Micron’s success in this generation will depend on its ability to execute on the “12-high” and “16-high” stacking requirements. Furthermore, the industry is already looking toward “Hybrid Bonding” for HBM5, a technology that eliminates the traditional bumps between chips for even better density and thermal performance. Micron’s R&D investment in hybrid bonding will be the next major technical milestone for investors to monitor.

Investor Conclusion

Micron Technology has successfully navigated the most difficult transition in its history. By moving from a follower to a leader in the HBM space, it has positioned itself as an essential partner to the AI industry. The transition to HBM4 is the next battlefield. With a superior power-efficiency profile driven by the 1-beta and 1-gamma nodes, and a strategic alliance with TSMC, Micron is well-positioned to increase its market share toward 30%.

The investor question regarding “parity” with SK Hynix remains open, but the financial reality is that even at 25% share, Micron is poised for record earnings. The “ceiling” is currently dictated by industry-wide packaging constraints, which act as a stabilizer for pricing. For investors, Micron represents a high-conviction play on the physical layer of the AI revolution. As HBM revenue continues to grow as a percentage of the total DRAM mix, the company’s valuation should continue to reflect its shift from a commodity maker to a specialized high-tech system provider.

Metric Checklist for 2026

- HBM Revenue Mix: Target 25% of total DRAM revenue by end of 2026.

- 1-gamma Yields: Monitor quarterly updates for mentions of “record ramp” or “yield improvement.”

- TSMC Allocation: Any news regarding increased CoWoS capacity for Micron’s HBM4 stacks is a major catalyst.

- Customer Win Announcements: Watch for formal HBM4 qualification from NVIDIA for the Rubin platform.

Micron is no longer playing catch-up; it is setting the pace. For the patient investor, the HBM4 era offers a rare combination of high visibility, margin expansion, and structural growth.